Byflipboarddev

Bringing the beauty of print to the mobile interface is our all-encompassing vision at Flipboard; in doing so, we’ve learned that it’s necessary to provide our users with an experience dedicated solely to their content. With powerful magazine and topical recommendations, we’ve nearly perfected the way our users find stories, but never before, until now, have we tinkered with how our users read them.

Why Flipboard Needs Summarization

At Flipboard, we’re known for our polished and beautiful dynamic layouts. Constructing these layouts is a challenge; with a user base fragmented across iPhone, iPad, Android, and Windows, it’s important for us to optimize our content appropriately to support varying screen sizes and content layouts. Solving for these size constraints becomes much easier when we can emphasize the key parts of content and filter out the less important.Sentence graphs in Summarization

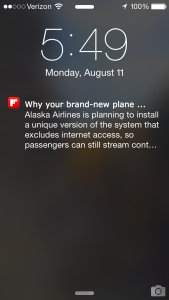

Our focus has been on extractive summarization. In extractive summarization, the objective is to identify the essential, or central, sentences in a document. One way of modeling a document is as a graph, with each sentence of the document represented with a node and the relationships between those sentences represented with weighted edges. We model sentences as bags of words, and the strength of interaction between two sentences as being the similarity between their respective word-sets. There are several standard metrics for this, such as Jaccard similarity and Hamming distance. Having selected a metric, we then normalize the edge weights such that the out-degree of each node sums to one.

The normalized adjacency matrix of the graph is, thus, stochastic. Given that, we can consider the centrality of nodes (sentences) in the graph; in particular, we can compute the PageRank centrality measure for each sentence in the document. Higher scoring sentences are more central and more typical of the document.1

We then sort the sentences by their scores, select the top n (depending on the amount of space available on-screen for the summary), and reorder them by their order of appearance in the original document.

All of this work can be done very quickly. Our Java implementation running on an AWS EC2 instance measured at an average of 16ms over 50,000 documents, with a standard deviation of 48ms. The 95th percentile measurement was 65ms.

We model sentences as bags of words, and the strength of interaction between two sentences as being the similarity between their respective word-sets. There are several standard metrics for this, such as Jaccard similarity and Hamming distance. Having selected a metric, we then normalize the edge weights such that the out-degree of each node sums to one.

The normalized adjacency matrix of the graph is, thus, stochastic. Given that, we can consider the centrality of nodes (sentences) in the graph; in particular, we can compute the PageRank centrality measure for each sentence in the document. Higher scoring sentences are more central and more typical of the document.1

We then sort the sentences by their scores, select the top n (depending on the amount of space available on-screen for the summary), and reorder them by their order of appearance in the original document.

All of this work can be done very quickly. Our Java implementation running on an AWS EC2 instance measured at an average of 16ms over 50,000 documents, with a standard deviation of 48ms. The 95th percentile measurement was 65ms.

Summarization in Action

As an example, here’s a summary of this blog post about Star Wars: Summary:George Lucas did write Star Wars, and his art and memorabilia collections will be housed in his Museum of Narrative Art in the Windy City. In honor of the Museum of Narrative Art and its star-studded cast of architects, here's a roundup of articles from Architizer that feature Star Wars-related architecture: Jeff Bennett's Wars on Kinkade are hilarious paintings that ravage the peaceful landscapes of Thomas Kinkade with the brutal destruction of Star Wars.

Someday I will have a place to put all my collections. It will most likely be my basement, or a little corner of my basement. But I didn't write Star Wars. If I had, I might be able to build a museum on the sparkling lakefront of Chicago, right next to Soldier Field. George Lucas did write Star Wars, and his art and memorabilia collections will be housed in his Museum of Narrative Art in the Windy City.

Alaska Airlines is planning to install a unique version of the system that excludes internet access, so passengers can still stream content on routes without coverage. If your plane is connected to the web, you'll also have access to streaming television content. Like Gogo, Global Eagle can stream content to customers on planes that aren't connected to the web. That satellite system could also enable the company to offer live TV, like the Global Eagle service you'll find on Southwest's planes, but Gogo's next-generation infrastructure is still a few years out.

Earlier this year, I boarded a United flight from Newark to San Diego. After passing the first few rows, a young boy turned to his mother and asked, "Why aren't there any TVs?" "It's probably an older plane," she responded -- but that couldn't be further from the truth. The aircraft, a 737-900 with Boeing's Sky Interior (a Dreamliner-esque recessed ceiling lit with blue LEDs), had only been flying for a few weeks. It looked new, and it even had that "new plane smell" most passengers would only associate with a factory-fresh auto.

The Future

While a combination of the central parts of content serves as a great guess of what’s important to a reader, it isn’t perfect, because importance is naturally subjective; while a culinary novice might be looking for a basic ingredients list from an article about mushroom risotto, a more experienced chef might be looking to learn new techniques from the same article. Putting user-specific data to work in creating personalized summaries is something we are very interested in and are excited to explore. We’re looking forward to rolling out summarization across Flipboard in the coming months. We’re always looking for ways to improve Flipboard through tackling interesting problems like summarization. If working on NLP or data-related projects is interesting to you, we’re hiring.- This approach was developed by Erkan and collaborators in their 2004 paper: LexRank: Graph-based Lexical Centrality as Salience in Text Summarization ↩